Public Member Functions

- `def __init__(self, states_df, score_type, max_num_mtries, tabu_len=10, ess=1.0, verbose=False, vtx_to_states=None)`
- `def move_approved(self, move)`
- `def finish_do_move(self, move)`
- `def restart(self, mtry_num)`

Public Member Functions inherited from learning.HillClimbingLner.HillClimbingLner

- `def __init__(self, states_df, score_type, max_num_mtries, ess=1.0, verbose=False, vtx_to_states=None)`
- `def climb(self)`
- `def do_move(self, move, score_change, do_finish=True)`
- `def refresh_nx_graph(self)`
- `def would_create_cycle(self, move)`
- `def move_approved(self, move)`
- `def finish_do_move(self, move)`
- `def restart(self, mtry_num)`
- `def cache_this(self, move, score_change)`
- `def empty_cache(self)`

Public Member Functions inherited from learning.NetStrucLner.NetStrucLner

- `def __init__(self, is_quantum, states_df, vtx_to_states=None)`
- `def fill_bnet_with_parents(self, vtx_to_parents)`

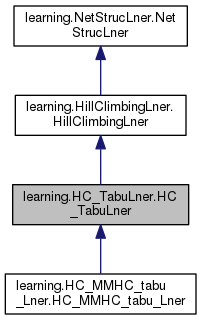

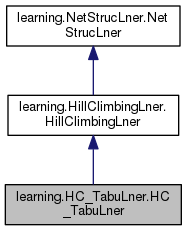

The class HC_TabuLner (Hill Climbing Tabu Learner) is a child of HillClimbingLner. It adds to the latter a tabu list, which is a memory of places that are forbidden to revisit, at least temporarily.

The idea is to keep a list of the last n moves and to disallow any next move that is in that list, BUT to allow next moves that go downhill if no uphill moves are currently possible and allowed. Each time a downhill move is necessary, the starting point of that downhill move is a local maximum. Of all the local maxima encountered, the one with the highest score is selected in the end.
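The procedure just described can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the HC_TabuLner code: the state is a tuple of bits (loosely, think of each bit as one possible arrow being present or absent), a move is "flip bit i", and the names `flip`, `tabu_hill_climb` and the `SCORES` landscape are all hypothetical. Following the description above, a state counts as a local max whenever the best allowed move from it does not go uphill.

```python
from collections import deque


def flip(state, i):
    """Return a copy of the bit tuple `state` with bit i toggled (one move)."""
    return state[:i] + (1 - state[i],) + state[i + 1:]


def tabu_hill_climb(score, n_bits, tabu_len=2, max_num_mtries=30):
    """Maximize score(state) over bit tuples, with a tabu list of recent moves.

    A move is "flip bit i"; the last tabu_len moves may not be repeated.
    Returns (best_loc_max, best_loc_max_score, loc_max_ctr).
    """
    state = (0,) * n_bits
    tabu_list = deque(maxlen=tabu_len)  # oldest move drops off when full
    best_loc_max, best_loc_max_score = None, float("-inf")
    loc_max_ctr = 0
    for _ in range(max_num_mtries):
        allowed = [i for i in range(n_bits) if i not in tabu_list]
        if not allowed:
            break
        # Best allowed move, even if it goes downhill.
        i_best = max(allowed, key=lambda i: score(flip(state, i)))
        cur_score = score(state)
        if score(flip(state, i_best)) <= cur_score:
            # No allowed uphill move: the current state is a local max.
            loc_max_ctr += 1
            if cur_score > best_loc_max_score:
                best_loc_max, best_loc_max_score = state, cur_score
        state = flip(state, i_best)
        tabu_list.append(i_best)
    return best_loc_max, best_loc_max_score, loc_max_ctr


# Toy landscape: greedy climbing from (0, 0, 0) stalls at (1, 1, 0),
# while the global max is (0, 1, 1).
SCORES = {
    (0, 0, 0): 0, (1, 0, 0): 3, (0, 1, 0): 1, (0, 0, 1): 1,
    (1, 1, 0): 4, (1, 0, 1): 2, (0, 1, 1): 6, (1, 1, 1): 0,
}

print(tabu_hill_climb(SCORES.__getitem__, n_bits=3, tabu_len=2, max_num_mtries=5))
# → ((0, 1, 1), 6, 2)
```

On this landscape a plain greedy climber would stop at (1, 1, 0) with score 4. Because flips of bits 0 and 1 are tabu at that point, the sketch is forced downhill to (1, 1, 1), from which the better local max (0, 1, 1) is reachable.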

Attributes

----------

is_quantum : bool

True for quantum bnets and False for classical bnets

bnet : BayesNet

a BayesNet in which we store what is learned

states_df : pandas.DataFrame

a pandas DataFrame with training data: each column is a node and each row is a sample. Each row/sample gives the state of each col/node.

ord_nodes : list[DirectedNode]

a list of DirectedNodes, named and ordered the same as the column labels of self.states_df.

max_num_mtries : int

maximum number of move tries

nx_graph : networkx.DiGraph

a networkx directed graph used to store arrows

score_type : str

score type, either 'LL', 'BIC', 'AIC', 'BDEU' or 'K2'

scorer : NetStrucScorer

an object of the NetStrucScorer class that keeps a running record of scores

verbose : bool

If True, a running commentary is printed to the console

vertices : list[str]

list of vertices (node names). Same as states_df.columns

vtx_to_parents : dict[str, list[str]]

dictionary mapping each vertex to a list of its parents' names

tabu_list : list[tuple[str, str, str]]

a list of the previous moves. The list's length is specified in the constructor by the tabu_len parameter. Every time a new move is added to the end of the tabu list, the first item of the list is removed.

best_loc_max_graph : networkx.DiGraph

best_loc_max_score : float

Every time the restart() function is called because a try yields no moves with a positive score change, we infer that a new local max has been reached. If the total score of the current local max is higher than best_loc_max_score, we replace both best_loc_max_score and best_loc_max_graph by those of the better local max.

loc_max_ctr : int

local maximum counter; counts the number of local maxima encountered.
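The fixed-length tabu_list described above behaves like a first-in, first-out queue. The sketch below is a minimal illustration, assuming moves are `tuple[str, str, str]`; the `("A", "B", "add")` field layout is hypothetical and need not match the learner's real move format. Python's `collections.deque` with `maxlen` drops the oldest item automatically once the queue is full, which matches the "add to the end, remove the first item" rule.

```python
from collections import deque

TABU_LEN = 3  # plays the role of the constructor's tabu_len

# Hypothetical moves of type tuple[str, str, str]; the field order is assumed.
moves = [("A", "B", "add"), ("B", "C", "add"), ("A", "C", "add"), ("A", "B", "del")]

tabu_list = deque(maxlen=TABU_LEN)
for move in moves:
    # Once the deque holds TABU_LEN moves, each append drops the oldest one.
    tabu_list.append(move)

print(list(tabu_list))
# → [('B', 'C', 'add'), ('A', 'C', 'add'), ('A', 'B', 'del')]
print(("A", "B", "add") in tabu_list)  # → False: the first move is no longer tabu
```

A plain list gives the same behavior with `tabu_list.append(move)` followed by `tabu_list.pop(0)`, but `pop(0)` on a list is O(n), whereas a deque discards from the left in O(1).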

`def learning.HC_TabuLner.HC_TabuLner.restart(self, mtry_num)`

Takes in mtry_num, the number of the current move try, and returns (restart_approved, mtry_num_fin), where mtry_num_fin equals the input mtry_num. So this function does nothing to mtry_num, although it could have; for example, in the class HC_RandomStartLner, this function sets mtry_num to zero. Restarts are always approved until they reach their limit, given by max_num_mtries. The current nx_graph and its score are stored if that score is better than the previous best score.

Parameters

----------

mtry_num : int

Returns

-------

bool, int
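The restart bookkeeping described above can be sketched as a small stand-alone class. This is a hypothetical `RestartSketch`, not the actual HC_TabuLner code, and it rests on several assumptions: a plain dict of vertex to parents stands in for nx_graph, a float attribute stands in for the scorer's total score, loc_max_ctr is incremented inside restart(), and a restart is approved while mtry_num is below max_num_mtries (the exact boundary used by the real class is not stated).

```python
import copy


class RestartSketch:
    """Hypothetical stand-in for the restart() bookkeeping of a tabu learner."""

    def __init__(self, max_num_mtries):
        self.max_num_mtries = max_num_mtries
        self.graph = {}     # stands in for nx_graph: vertex -> list of parents
        self.score = 0.0    # stands in for the current total score
        self.best_loc_max_graph = None
        self.best_loc_max_score = float("-inf")
        self.loc_max_ctr = 0

    def restart(self, mtry_num):
        """Return (restart_approved, mtry_num_fin), leaving mtry_num unchanged."""
        # restart() is reached when no move improves the score, so the
        # current graph is treated as a new local max.
        self.loc_max_ctr += 1
        if self.score > self.best_loc_max_score:
            # Store a deep copy so later moves do not alter the saved graph.
            self.best_loc_max_score = self.score
            self.best_loc_max_graph = copy.deepcopy(self.graph)
        # Assumed boundary: approve while below the move-try limit.
        restart_approved = mtry_num < self.max_num_mtries
        return restart_approved, mtry_num
```

The deep copy matters because the learner keeps mutating its working graph after a restart; storing a reference instead would silently overwrite the saved local max.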

1.8.11